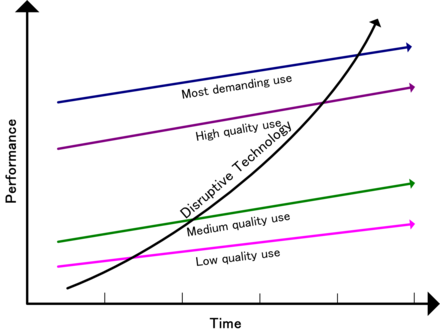

Clay Christensen, the Harvard Business School professor, introduced the theory of disruptive technology to explain how new innovations can upend established industries. According to Christensen, disruptive technologies initially emerge in niche markets, where major players overlook them because they are less profitable or less sophisticated. These technologies then improve steadily and begin to attract mainstream customers, eventually displacing the dominant technologies and companies.

Generative AI fits perfectly into Christensen’s model. Initially, it was seen as a novelty, used for creating deepfakes or enhancing photos. But as the technology has improved, its potential applications in the film and video industries have become apparent.

To test where AI currently stands in its disruptive potential, I ran an experiment. I found Budweiser's famous Old School Delivery Super Bowl ad and tried to recreate it shot by shot and audio clip by audio clip to see how close I could get. The result shows both the potential and the limitations of the current technology in the realm of commercials.

Television commercials have the highest production values of any video medium, so this was a high bar. Good commercials also convey an emotional story in a very short time using refined, skillful storytelling. The actors' performances are key as well, and this is something AI currently struggles to replicate. In addition, a good commercial often uses popular songs and images. This ad uses The Band's famous hit, "The Weight." To keep the experiment pure (or as pure as possible), I used an AI songwriting app. It was pretty amazing, but it's hard to compete with a classic tune like that.

Generative AI is not just a technological advancement; it is a disruptive force that echoes Clay Christensen's theory. As it continues to evolve, it will challenge traditional models in the film and video industries, offering new possibilities for creativity and efficiency. TV commercials are just the beginning. This technology promises to reshape how we create and consume visual content, heralding a new era of innovation and disruption.

The Results

Here is the original ad that aired during this year's Super Bowl.

Here is the AI version.

The Process I Used

I used a number of AI tools for the test. The whole project took about six hours and involved the following steps:

I found the ad using Perplexity and dropped it into Final Cut Pro to use as a storyboard. I then took a screen capture of the first shot and had ChatGPT generate a description of it.

It came back with:

“The image depicts a snowy landscape featuring a small village or town nestled in a valley. Snow blankets the ground, rooftops, and surrounding trees, creating a picturesque winter scene. The village is surrounded by forested hills or mountains, also covered in snow, adding to the serene and quiet atmosphere. The winding roads and railway tracks are partially visible, suggesting limited traffic or movement due to the snow. The overall mood of the image is calm and tranquil, evoking a sense of peaceful isolation typical of a winter countryside setting.”

ChatGPT

Next, I used the description to generate an image in Midjourney. I then used Runway's director settings to turn it into a video clip that slowly zooms in. For the music, I took the lyrics from "The Weight" by The Band and had ChatGPT write a similar set of lyrics. I fed these new lyrics into Udio to generate a "bluesy" number, and it came back with two tracks, both surprisingly good.

I repeated the process of capturing, describing, and rendering each shot. I experimented with a number of video generation tools that I’ll discuss in more depth in another post.

For the speaking parts of the commercial, I generated characters and used RunwayML to animate them and add lip syncing. The voices were created with ElevenLabs. Creating emotionally compelling dialogue with AI remains one of the greatest challenges.

Once I had assembled all the clips, I moved on to audio design, using the AI-generated song as a stand-in for "The Weight." I then used Epidemic Sound as a source for Foley and sound effects to fill in the audio. Keep in mind that video generation at this point is strictly visual, so every sound you hear has to be sourced elsewhere and added in.

I’ll be doing more of these experiments over the coming weeks. Let me know what you think.